For the first time in history, technology is running ahead of the banking operating model. AI can handle 97% of the decisions a bank makes. Faster, cheaper and more fairly. I have built these systems and I believe in them.

But there is a 3% that keeps me up at night. Loan denials with no alternative. Account freezes during family emergencies. Collections triggers firing three days after someone loses their job. The model does not know any of that happened.

This is not just an emerging market problem. It happens in rural Britain, in post-industrial America, in migrant communities across Europe.

An article by Bryan Carroll, Digital Banking Futurist, Strategy & Delivery

Her name was Linh.

She ran a small bánh mì stall on the edge of a busy market in the Mekong Delta, Vietnam. She had been banking with a digital lender for eight months. She made her repayments on time, every single time. She saved in small amounts. She was, by every measure, exactly the kind of customer financial inclusion was built for.

Then the floods came.

Her stall was damaged. Her stock was destroyed. She missed one repayment. Three days later, nobody called. A decision was made anyway. Her credit limit was reduced by 60%. Her loan application, submitted before the floods, was denied. No explanation. No call. No review. Just a push notification telling her the decision was final.

She thought she had done something wrong.

She had not. The model had simply done its job.

That is the problem I want to talk about.

The Case for Automation Is Real

Let me be direct: I am not here to argue against AI in banking.

The best part of three decades spent helping build digital financial systems across Asia, Africa, and Europe has taught me what good automation does.

AI makes faster decisions, more consistent ones and, in many cases, fairer ones. Research consistently shows that human loan officers in emerging markets reject qualified female and lower-income borrowers at measurably higher rates than well-trained models. Human bias is real, and automation can help correct it.

This is not a theoretical concern for me. I am involved in the making of these systems and have often celebrated them. Which is exactly why I feel the weight of what I am about to say.

Not All Decisions Are Equal

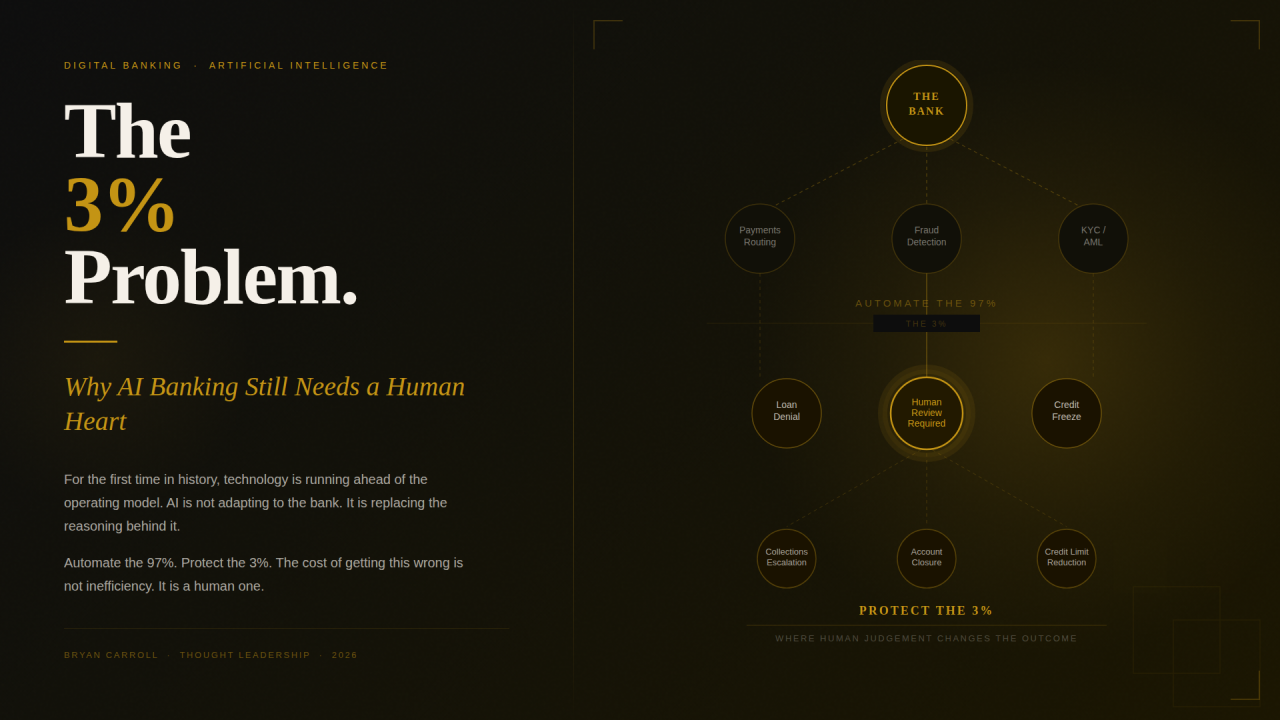

The efficiency case for AI automation covers roughly 97% of banking interactions.

Payment routing, interest calculation, fraud pattern detection, KYC document verification, chatbot query resolution, statement generation. Automate all of it. You will be faster, cheaper, and more accurate than any human team you could assemble.

There is something worth saying about why this moment is different from every previous wave of banking technology.

For the first time in history, technology is running ahead of the operating model. Every previous innovation (core banking, internet banking, mobile) adapted to the processes that already existed. This time, AI is not adapting to the bank. It is replacing the reasoning behind it.

When you map the Level 0 and Level 1 processes that make up universal banking (the foundational workflows that have governed how banks take deposits, extend credit, manage risk, and serve customers), the vast majority are rules-based, repeatable, and context-independent. They do not require human judgement. They require human execution, which AI now performs faster, cheaper, and more consistently.

That is where the 97% comes from. It is not a number I arrived at lightly. It is what you find when you actually look at the process architecture of a bank and ask honestly: where does human judgement genuinely change the outcome?

There is a 3% that is different.

A small, clearly defined category of decisions where the consequence to the human being on the other side is not merely inconvenient. It is devastating.

I keep coming back to five moments. Five points in a customer’s financial life where getting it wrong is not a service failure. It is a human one.

The first is loan denial in a financial desert. In markets where there is no alternative lender, no branch to visit, no human to appeal to, an automated denial is not a no. It is a full stop.

The second is account or credit freeze during a crisis. Fraud detection models are impressive. Their false-positive rates are not. When a legitimate customer’s only account is frozen during a typhoon, a hospitalisation, or a family emergency, the harm is immediate and acute.

The third is debt collection escalation. Automated collections triggers do not know that your borrower just lost their job. That their business burned down. That they are in hospital. The model sees days past due. It does not see the person.

The fourth is involuntary account closure. AML and KYC automation closes thousands of accounts every month across Southeast Asia, often with no explanation, no pathway to appeal, and no acknowledgment that for many of these customers, this was their first and only banking relationship.

The fifth is the one that keeps me up at night. Credit limit reduction during financial stress. The model sees rising risk. It protects the bank. The customer, already struggling, receives a push notification telling them that the lifeline they were counting on has just been shortened. The moment they needed their bank most is the moment the bank pulled back.

These are not edge cases. In global banking, and especially in emerging markets, they happen every single day.

The Accountability Vacuum

This is not new. In 2008, automated systems across Western markets pulled credit from millions of people at the exact moment they needed it most. We called it a financial crisis. For the families on the other end, it was something more personal than that.

We learned lessons. Then we built faster systems and forgot them.

Here is what worries me most.

When a human being makes one of these decisions wrongly, there is accountability. A face, a name. Someone who can be called. Someone who can say: I made this decision, and I got it wrong, and here is what we are going to do about it.

When an AI agent or an algorithm makes it, accountability dissolves.

The model was trained on historical data, data that may itself contain the biases and exclusions of the financial system we were supposed to be disrupting. The vendor provided the engine. The bank approved deployment. A regulator somewhere issued general guidelines. Nobody signed the denial. Nobody looked Linh in the eye.

This is not just a moral failure. It is a liability that the industry has not yet priced in.

The numbers are not complicated. A wrongly automated decision in the 3% does not just cost a customer. It costs a complaint, a support escalation, a regulatory flag, a social media post, a closed account. In markets where acquisition costs are high and trust is fragile, that chain of events is far more expensive than the human review that could have prevented it.

The 3% solution is not a cost. It is an insurance policy. One with a very clear premium and a very predictable return.

The EU AI Act has classified credit scoring as high-risk AI under Annex III, requiring transparency, human oversight, and the right to appeal. Regulators in the Philippines, through BSP Circular 1160 implementing RA 11765, are moving in the same direction on consumer protection in digital finance. Regulators are finally starting to ask the right questions.

Regulation alone will not solve this. Culture will.

What “Human in the Loop” Actually Means

I want to be precise here, because this phrase gets used loosely and it drives me a little crazy when it does.

It does not mean slowing everything down. It does not mean sending a loan officer to a customer’s home. It does not mean recreating the inefficiency we worked so hard to eliminate.

Let me tell you what it actually looked like when we tried to get this right.

When we built TNEX, Vietnam’s first digital bank, I made a decision that some of my team thought was unusual. We gave every one of our 1.7 million customers the direct email address of the CEO.

The reason was simple. I wanted to know when we got it wrong. I suppose in AI terminology I wanted to be the last human in the loop.

Some of those emails were hard to read. A few changed decisions we had already made. All of them made us better.

That experience, combined with what I witnessed on the ground in Sub-Saharan Africa and most recently here in the Philippines, shapes everything I believe about how this should be built. The names change, the languages change, the circumstances change. The pain does not.

So here is what I think getting it right actually requires:

Someone with authority needs to review the hard calls before they land on a customer. Not after a complaint, before. Flag the high-consequence decisions, hold them, route them to a human who has the power to act differently if the situation demands it.
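For readers who think in systems, the flag, hold and route step can be sketched in a few lines. This is a minimal illustration, not how any particular bank implements it: the names `DecisionType`, `Decision`, `Routed`, `route` and the in-memory `review_queue` are all hypothetical, and the set of high-consequence decisions is simply the five moments listed earlier in this article.

```python
from dataclasses import dataclass
from enum import Enum


class DecisionType(Enum):
    # Examples from the automatable 97%.
    PAYMENT_ROUTING = "payment_routing"
    STATEMENT_GENERATION = "statement_generation"
    # The five high-consequence moments (the 3%).
    LOAN_DENIAL = "loan_denial"
    ACCOUNT_FREEZE = "account_freeze"
    COLLECTIONS_ESCALATION = "collections_escalation"
    ACCOUNT_CLOSURE = "account_closure"
    CREDIT_LIMIT_REDUCTION = "credit_limit_reduction"


# Assumption: these five types are always held for human review.
HIGH_CONSEQUENCE = frozenset({
    DecisionType.LOAN_DENIAL,
    DecisionType.ACCOUNT_FREEZE,
    DecisionType.COLLECTIONS_ESCALATION,
    DecisionType.ACCOUNT_CLOSURE,
    DecisionType.CREDIT_LIMIT_REDUCTION,
})


@dataclass
class Decision:
    customer_id: str
    decision_type: DecisionType
    model_outcome: str  # what the model proposes, e.g. "deny"


@dataclass
class Routed:
    decision: Decision
    status: str  # "applied" or "held_for_review"


def route(decision: Decision, review_queue: list) -> Routed:
    """Hold high-consequence decisions for a human; apply the rest."""
    if decision.decision_type in HIGH_CONSEQUENCE:
        # Nothing reaches the customer until a reviewer acts on the queue.
        review_queue.append(decision)
        return Routed(decision, "held_for_review")
    return Routed(decision, "applied")
```

Under these assumptions, a proposed credit limit reduction is appended to `review_queue` and comes back as `held_for_review`, while a payment routing decision is applied straight away. The point of the design is that the hold happens before the notification, not after the complaint.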

When something significant is denied, the customer deserves a reason they can actually understand. Not a score, not a code: a sentence, ideally from a person.

Stop making vulnerable customers fight through a chatbot to reach a human. Build the escalation in from day one. Make it visible. “A member of our team will review your case within 24 hours” is not a concession to inefficiency. It is a product feature. I believe it is one of the most important ones you will ever build.

The humans who remain in these processes need to be better than the ones they replaced. More empowered, trained to recognise vulnerability. Trusted to override a model when the model is technically right but humanly wrong.

Efficiency and empathy are not in opposition. The job is knowing where each one belongs.

The Emerging Market Multiplier

Everything above is amplified in markets like Vietnam, the Philippines, and Sub-Saharan Africa.

These five moments are not unique to emerging markets. They happen in rural Britain, in post-industrial American towns, in migrant communities across Europe. The difference is not the type of harm. It is the depth of it. In the West, systems exist to slow the damage. Here, there is nothing between the automated decision and the person it lands on.

The safety nets that cushion bad automated decisions in developed markets often do not exist here. There is no second lender to go to, no savings buffer, often no family network with capital to lend. When a digital bank gets it wrong, there is often nowhere else to turn.

The Iran conflict is a reminder of how quickly global events translate into local pain. Fuel, food, transport. The cost of running a small stall in the Mekong Delta or a micro-business in Manila does not exist in isolation from what is happening in the Strait of Hormuz. When those costs spike, the first thing that suffers is the repayment. The model does not know that. A human would.

Something else matters enormously in these markets. This may be a customer’s first experience of formal financial services.

If that first experience is a cold automated denial with no explanation and no human face, we have not just lost a customer. We have confirmed every fear they had about whether institutions like ours were really built for people like them.

Trust is the foundation of banking. In emerging markets, that foundation is already fragile. One bad automated moment, experienced by enough people, shared by enough voices, can fracture it faster than any competitor ever could.

Access is not the same as dignity. We sometimes forget that.

A Question Worth Sitting With

I have been part of this industry for a long time. I have made my share of decisions I am proud of and a few I am not. That balance is what keeps me honest.

The longer I spend in this work, and the more Linhs I hear about, the more what is happening right now here in the Philippines cuts through me. Fuel prices are climbing, food costs are rising, and families who were managing are suddenly not managing. The Iran conflict is not an abstraction here. It lands on a tricycle driver in Pasig, a sari-sari store owner in Cebu, a market vendor in Davao: people whose repayment capacity can shift overnight because of something happening ten thousand kilometres away in the Strait of Hormuz. When that happens, the model fires. The notification arrives. Nobody calls. That is the warning I am writing about.

Before you automate the next consequential decision, ask yourself this:

If this goes wrong for the person on the other end, who is responsible? Can that person look the customer in the eye?

If the answer is nobody, you have not finished building your bank.

You have only finished building your efficiency.

The 3% is not a technical problem. It is a human one. It requires a human answer. The industry has the tools, the talent, and the capital to get this right.

What it needs now is the will.
